The Algorithmic Gender Bias — Lessons from the Amazon AI Hiring Failure

Amazon built an AI tool to find the best candidates. It ended up penalizing resumes associated with women. The case is a clear example of how gender bias can be embedded and amplified through algorithms, and the risks can be even higher in the Global South.

AI Fairness 101 - Real-World Incidents

Part 7 of 7


AI Fairness 101 - Real Incident (2017)

Incident Report: The Algorithmic Gender Bias — Lessons from the Amazon AI Hiring Failure

- 🛠️ System used: an experimental Amazon recruiting model trained on roughly 10 years of past resumes

- 👩 Most affected group: women applicants and resumes containing female-coded markers

- ⚠️ Core failure: the model learned male-dominated historical hiring patterns as a proxy for merit

- 🚫 Outcome: resumes with terms like "women's" were downgraded, and Amazon ultimately abandoned the tool

⚠️ Key Takeaway

Amazon’s recruiting model did not discover the “best” candidates. It learned to prefer patterns that were common in a male-dominated hiring history.

1. What Happened 💼

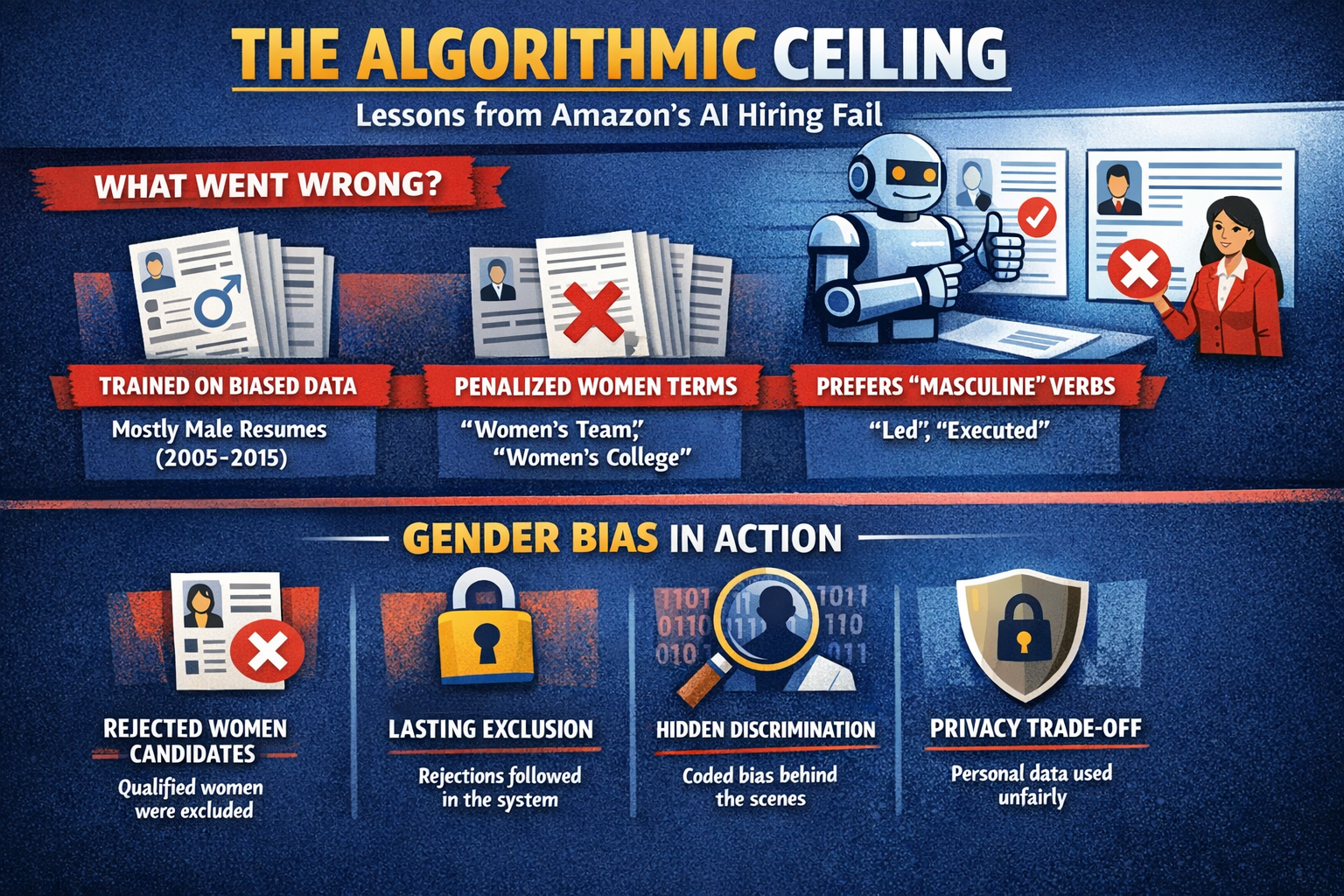

Between roughly 2014 and 2017, Amazon experimented with an automated recruiting tool intended to help rank job applicants. The system was trained on past resumes submitted to the company over about a decade. Its purpose was straightforward: identify patterns associated with candidates Amazon had previously favored, then use those patterns to score new applicants.

The problem was that the historical data reflected a tech industry, and a hiring history, in which men were heavily overrepresented. As a result, the model learned that signals associated with male applicants were more desirable. Reuters reported in 2018 that the system penalized resumes containing the word “women’s”, as in “women’s chess club captain”, and downgraded graduates of two all-women’s colleges.

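Amazon reportedly edited the system to make it neutral to those particular terms, but the deeper problem remained: there was no guarantee the model would not find other ways of sorting candidates that tracked gender. It was learning from a biased historical record, not from an objective definition of job quality. The company ultimately abandoned the project.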

System Overview

| Intended mechanism | What happened in practice |
|---|---|
| Train a model on historical resumes to identify strong candidates | The model learned patterns from a hiring history shaped by male dominance in technical roles |
| Rank candidates from 1 to 5 stars for recruiter review | The ranking system penalized female-coded language and signals associated with women |
| Remove explicit gender indicators to make the process fairer | The model still found proxy signals for gender in education, activities, and word choice |

The lesson is simple: removing the gender field does not remove gender bias if the rest of the data still carries it.
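To make the proxy effect concrete, here is a minimal sketch in Python. It is not a reconstruction of Amazon's system, whose internals were never published. The toy history, the `token_log_ratio` scorer, and the smoothing value are all hypothetical, chosen only to show that a scorer fitted to skewed past outcomes can assign a negative weight to a word like "women's" even though no gender field exists anywhere in the data.

```python
import math
from collections import Counter

# Hypothetical toy history: (resume tokens, was the candidate advanced?).
# There is no gender column; the skew lives only in the outcomes.
HISTORY = [
    (["python", "chess", "captain"], True),
    (["java", "rugby", "captain"], True),
    (["python", "robotics", "lead"], True),
    (["python", "rugby", "lead"], True),
    (["python", "women's", "chess", "captain"], False),
    (["java", "women's", "college", "robotics"], False),
]


def token_log_ratio(history, smoothing=1.0):
    """Weight each token by log(P(token | advanced) / P(token | rejected)).

    Add-one style smoothing keeps the ratio finite for tokens that appear
    in only one of the two outcome groups.
    """
    advanced, rejected = Counter(), Counter()
    n_advanced = n_rejected = 0
    for tokens, was_advanced in history:
        if was_advanced:
            advanced.update(set(tokens))
            n_advanced += 1
        else:
            rejected.update(set(tokens))
            n_rejected += 1

    weights = {}
    for token in set(advanced) | set(rejected):
        p_adv = (advanced[token] + smoothing) / (n_advanced + 2 * smoothing)
        p_rej = (rejected[token] + smoothing) / (n_rejected + 2 * smoothing)
        weights[token] = math.log(p_adv / p_rej)
    return weights


def score_resume(tokens, weights):
    """Sum the learned weights of the distinct tokens in a resume."""
    return sum(weights.get(token, 0.0) for token in set(tokens))


weights = token_log_ratio(HISTORY)

# Two resumes that differ only by the word "women's".
baseline = score_resume(["python", "chess", "captain"], weights)
with_marker = score_resume(["python", "women's", "chess", "captain"], weights)

print("weight for \"women's\":", round(weights["women's"], 3))
print("score without marker:", round(baseline, 3))
print("score with marker:   ", round(with_marker, 3))
```

In this toy setup the two resumes are identical except for one word, yet the second scores lower, because the word only ever appeared on resumes that were rejected in the past. Deleting that one token from the vocabulary would not help much if other tokens (a college name, a sport, a club) carry the same signal.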

2. Impact ⚠️

The Amazon tool was never fully rolled out as a final production hiring system, but the case became one of the most widely cited examples of algorithmic bias in employment.

Its significance comes from three things:

- 👩 It showed how quickly past inequality can become automated decision logic. A model trained on biased history can reproduce that history at scale.

- 🚪 It highlighted hidden exclusion. If an automated screening tool filters candidates out early, many may never reach a human reviewer.

- 🏢 It exposed a broader employment risk. As more employers use software to sort, score, and rank applicants, biased screening can shape access to jobs long before interviews happen.

The case also changed the public conversation. It became harder to claim that automated hiring is neutral simply because a machine, rather than a person, makes the first cut.

3. Lifecycle Failure 🧭

The Amazon case was not just a model bug. It was a failure across the AI lifecycle.

- 🧩 Problem formulation: the system treated past hiring success as a proxy for future merit, even though past hiring reflected structural imbalance.

- 🗂️ Data layer: the training data came from resumes submitted during years when technical hiring was skewed toward men.

- 🤖 Model development: the model identified patterns that correlated with past selection, including gender-linked proxies.

- 🧪 Validation: removing explicit references to gender did not solve the problem, because other variables still carried gender information.

- 🏢 Deployment decision: Amazon eventually recognized that the system could not be relied upon and abandoned it.

An AI system can fail even when it is technically doing what it was trained to do. If the training objective is flawed, the output will be flawed too.

4. Bias Types 🧪

The Amazon hiring case reflects several overlapping forms of bias:

- 🕰️ Historical bias: the model learned from a past hiring environment shaped by unequal gender representation.

- 👥 Representation bias: the training data overrepresented male candidates in the relevant job categories.

- 🧭 Proxy bias: even without an explicit gender field, the system used related signals that stood in for gender.

- 🤖 Automation bias: recruiting software can encourage decision-makers to trust rankings more than they should.

This is why “blind” systems are not automatically fair. Bias often remains in the surrounding data, labels, and institutional context.
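One way an audit team might look for proxy signals is to compare how often each feature appears across groups, using a sample where gender is known for audit purposes only. The sketch below is a simplified illustration under that assumption. The `AUDIT_SAMPLE` records and the `feature_rate_gaps` helper are hypothetical, and a real audit would need far more data and a proper statistical test.

```python
from collections import defaultdict

# Hypothetical audit sample. Gender is recorded here only so the audit can
# check for leakage; the screening model itself would never see it.
AUDIT_SAMPLE = [
    {"gender": "F", "features": {"python", "women's college", "netball"}},
    {"gender": "F", "features": {"java", "women's chess club"}},
    {"gender": "F", "features": {"python", "robotics"}},
    {"gender": "M", "features": {"python", "rugby"}},
    {"gender": "M", "features": {"java", "robotics"}},
    {"gender": "M", "features": {"python", "rugby", "chess club"}},
]


def feature_rate_gaps(sample, group_a="F", group_b="M"):
    """Return (feature, gap) pairs sorted by absolute gap, largest first.

    gap = share of group_a records with the feature
          minus share of group_b records with the feature.
    A gap far from zero suggests the feature can stand in for group membership.
    """
    counts = {group_a: defaultdict(int), group_b: defaultdict(int)}
    totals = {group_a: 0, group_b: 0}
    for row in sample:
        group = row["gender"]
        if group not in totals:
            continue  # ignore records outside the two groups being compared
        totals[group] += 1
        for feature in row["features"]:
            counts[group][feature] += 1

    if totals[group_a] == 0 or totals[group_b] == 0:
        raise ValueError("both groups need at least one record")

    features = set(counts[group_a]) | set(counts[group_b])
    gaps = {
        f: counts[group_a][f] / totals[group_a] - counts[group_b][f] / totals[group_b]
        for f in features
    }
    return sorted(gaps.items(), key=lambda item: abs(item[1]), reverse=True)


ranked = feature_rate_gaps(AUDIT_SAMPLE)
for feature, gap in ranked:
    print(f"{feature:20s} gap = {gap:+.2f}")
```

Features with a gap near zero (here, "python") say little about gender, while features with large gaps are candidates for closer review. A feature does not need to mention gender to rank high on this list.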

5. Global South Lens 🌍

The Amazon case came from the United States, but the warning travels easily to Global South labor markets.

Many employers in developing economies are beginning to use digital hiring tools for screening, ranking, and assessment. In those settings, the risks can be sharper:

- 📄 Unequal access to credentials: highly capable candidates may be filtered out because they lack elite institutions, polished resumes, or formal work histories.

- 🌐 Language and cultural mismatch: systems trained on Western resumes, English-language norms, or foreign workplace signals may misread local talent.

- 👩‍💼 Existing inequality: women and marginalized communities often already face structural barriers in education, employment, and promotion. Automated screening can harden those barriers.

- 🧾 Informal labor realities: many strong candidates in the Global South work in informal or non-standard settings that do not fit the templates automated hiring tools expect.

In short, a hiring system trained in one labor market should not be assumed to work fairly in another. If local realities are missing from the data, local people are likely to be misjudged by the model.

6. Bigger Picture 🔎

The Amazon case illustrates a broader rule for AI governance:

Hiring systems do not simply evaluate talent. They evaluate what past institutions decided talent looked like.

That has practical implications for any organization using AI in employment:

- 🧪 Test before use. Hiring tools should be audited for unequal outcomes across gender and other protected groups.

- 🧠 Review the objective. A model trained on past hiring decisions may only reproduce old preferences.

- 👥 Keep human oversight meaningful. Human review should be able to challenge and override automated rankings.

- 📢 Require transparency. Applicants should know when automated tools are being used and how decisions can be contested.
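As a sketch of what "test before use" can look like in practice, the example below computes selection rates per group and compares them using the four-fifths rule of thumb, a common first-pass screen for adverse impact in US employment selection. The counts are invented for illustration, the function names are hypothetical, and falling below 0.8 is a signal for further investigation, not a legal finding.

```python
def selection_rates(counts):
    """counts maps group -> (number advanced, number of applicants)."""
    rates = {}
    for group, (advanced, applicants) in counts.items():
        if applicants <= 0:
            raise ValueError(f"group {group!r} has no applicants")
        rates[group] = advanced / applicants
    return rates


def impact_ratios(rates):
    """Divide each group's selection rate by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        raise ValueError("no group has any selections")
    return {group: rate / best for group, rate in rates.items()}


def flag_groups(ratios, threshold=0.8):
    """Return groups whose impact ratio falls below the threshold."""
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Hypothetical screening results: (advanced to interview, total applicants).
SCREENING_COUNTS = {"women": (12, 100), "men": (20, 100)}

rates = selection_rates(SCREENING_COUNTS)
ratios = impact_ratios(rates)
flagged = flag_groups(ratios)

for group in sorted(ratios):
    print(f"{group}: rate = {rates[group]:.2f}, impact ratio = {ratios[group]:.2f}")
print("below four-fifths threshold:", flagged)
```

With these invented numbers, women advance at 12% and men at 20%, giving an impact ratio of 0.60 for women, which falls below the 0.8 threshold. A check like this is cheap to run on every release of a screening tool, and it should be repeated for intersecting groups and at each stage of the hiring funnel, not only at the final decision.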

The main lesson is not that AI should never be used in hiring. It is that hiring is a high-stakes area where biased data, weak validation, and uncritical trust in automation can quickly turn efficiency tools into discrimination tools.

