AI Hiring Bias Exposed: How SiriusXM’s Algorithm Rejected Qualified Candidates

This article examines the landmark Harper v. Sirius XM Radio, LLC lawsuit, highlighting how automated hiring systems can institutionalize racial discrimination through proxy biases like zip codes and educational institutions. By analyzing the alleged technical and systemic failures of the iCIMS implementation, it offers a critical roadmap for corporate AI governance that can keep qualified talent from becoming algorithmically invisible in an era of increasing regulatory scrutiny.

AI Fairness 101 - Real-World Incidents

Part 9 of 10

Table of Contents

- 🎥 Explained: AI Hiring Bias Exposed - How SiriusXM’s Algorithm Rejected Qualified Candidates

- AI Fairness 101 - Real Incident (2023)

- Incident Report: The Algorithmic Bias — Lessons from the Sirius-XM AI Hiring Failure

- AI Fairness Spotlight: The “Digital Gatekeeper” at SiriusXM

- 1. What Happened: The Case of Harper v. Sirius XM Radio, LLC 🚨

- 2. Impact: Individual Exclusion and Systemic Erosion ⚖️

- 3. Lifecycle Failure: The Poisoned Well of Training Data ⚙️

- 4. Bias Types: Proxies, Dialects, and Dehumanization 🔍

- 5. Global South Lens: The Export of Algorithmic Inequality 🌍

- 6. Bigger Picture: The Regulatory Wave and Accountability 🛡️

- 7. References 📚

🎥 Explained: AI Hiring Bias Exposed - How SiriusXM’s Algorithm Rejected Qualified Candidates

AI Fairness 101 - Real Incident (2023)

Incident Report: The Algorithmic Bias — Lessons from the Sirius-XM AI Hiring Failure

- 🛠️ System used: SiriusXM's hiring workflow allegedly relied on the iCIMS applicant tracking system to score and filter candidates

- 👤 Most affected group: qualified Black applicants, especially candidates from Detroit whose zip codes and schools could act as racial proxies

- ⚠️ Core allegation: neutral-looking variables such as zip code and educational background acted as stand-ins for race

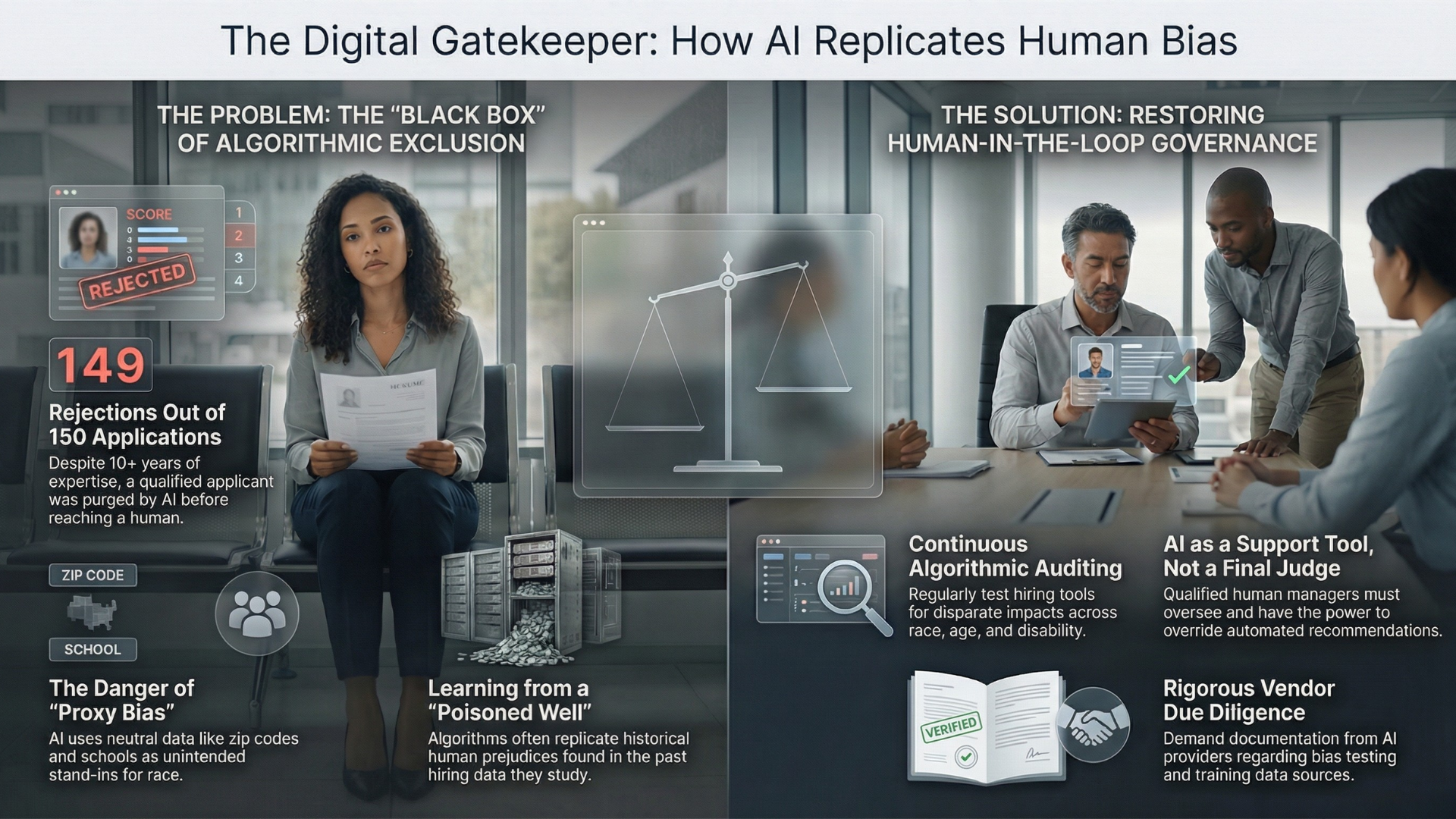

- 🚫 Outcome: the plaintiff alleges he was rejected from 149 of 150 roles, often before any human review

⚠️ Key Takeaway

Removing race from a hiring form does not make an automated system fair. If the model can still infer race through proxies like geography or schooling, discrimination can persist behind a veneer of neutrality.

AI Fairness Spotlight: The “Digital Gatekeeper” at SiriusXM

1. What Happened: The Case of Harper v. Sirius XM Radio, LLC 🚨

The recruitment landscape is undergoing a radical, high-stakes transition from human-centric vetting to a reliance on Automated Decision Systems (ADS). While these tools are marketed as neutral efficiency engines, they are now facing a pivotal legal “stress test.” Harper v. Sirius XM Radio, LLC is a landmark challenge to the “black box” of automated hiring, built on the principle that delegating decisions to AI does not absolve an employer of its civil rights obligations. The case matters to every job seeker because it shows how easily an algorithm can become an invisible barrier to a livelihood.

On August 4, 2025, Arshon Harper filed a class action complaint in the U.S. District Court for the Eastern District of Michigan. Harper, a Detroit-based African American information technology professional with more than a decade of industry experience, alleges that SiriusXM’s AI-powered hiring process resulted in systemic racial discrimination. He alleges that, despite meeting or exceeding the qualifications for the roles, he was rejected for 149 of 150 unique positions. Crucially, he alleges that this near-total exclusion happened inside a “black box”: his candidacy was screened out before a human recruiter ever reviewed his resume. Notably, Harper is representing himself (pro se), which shows that even self-represented litigants can now put algorithmic hiring practices under serious legal and corporate scrutiny.

System Overview

| Intended mechanism | What allegedly happened in practice |
|---|---|
| Use the iCIMS ATS to score and route applicants efficiently | Candidates were allegedly filtered before any human recruiter review |
| Rank applicants using seemingly neutral variables | Variables such as zip code and educational background allegedly operated as proxies for race |
| Standardize hiring decisions through automation | The system allegedly reproduced structural exclusion behind a veneer of neutrality |

The technical engine behind this exclusion was allegedly the iCIMS Applicant Tracking System (ATS), which worked through several automated layers:

- Data Scoring: The system analyzed application materials and assigned scores based on specific variables to rank or eliminate candidates.

- Algorithmic Proxy Bias: The ATS used data points such as zip codes and educational institutions. In a racially segregated city like Detroit, zip codes can act as strong proxies for race, allowing an algorithm to “learn” to exclude minority candidates under the guise of geographic neutrality.

- Automated Elimination: These scores served as a digital gatekeeper, effectively purging Harper’s resume from the pool before it could reach human oversight.
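To see why removing a race field is not enough, here is a minimal Python sketch on invented, synthetic data. The zip labels `ZIP-A`/`ZIP-B`, the counts, and the `proxy_accuracy` helper are hypothetical and are not drawn from the case or from iCIMS. It measures how accurately race can be guessed from zip code alone, by predicting the most common group within each zip code.

```python
from collections import Counter, defaultdict

# Hypothetical synthetic applicants as (zip_code, race) pairs; NOT data from the case.
applicants = (
    [("ZIP-A", "Black")] * 90 + [("ZIP-A", "White")] * 10
    + [("ZIP-B", "White")] * 85 + [("ZIP-B", "Black")] * 15
)

def proxy_accuracy(rows):
    """Share of rows whose race is guessed correctly from zip code alone."""
    by_zip = defaultdict(Counter)
    for zip_code, race in rows:
        by_zip[zip_code][race] += 1
    # The best single guess for each zip code is its most common group.
    correct = sum(counts.most_common(1)[0][1] for counts in by_zip.values())
    return correct / len(rows)

# Without zip code, the best guess is simply the overall majority group.
baseline = Counter(race for _, race in applicants).most_common(1)[0][1] / len(applicants)

print(f"guess without zip code: {baseline:.3f}")                       # 0.525
print(f"guess from zip code alone: {proxy_accuracy(applicants):.3f}")  # 0.875
```

In this toy population, zip code alone recovers race for 87.5% of applicants, compared with 52.5% without it, so any model that weights zip code can act on race without ever seeing a race column.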

The litigation pursues two distinct legal theories: Disparate Treatment, alleging intentional discrimination in the design or use of the tool, and Disparate Impact, which focuses on discriminatory outcomes regardless of the company’s intent. This distinction is vital: under a disparate impact theory, “good intentions” cannot shield a company whose automated outcomes replicate historical inequities; the employer must instead show that the practice is job-related and consistent with business necessity. Ultimately, this case highlights how automated systems can turn a routine job application into a wall of systemic exclusion.
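Disparate impact claims usually rest on selection statistics. One common screen, from the EEOC’s Uniform Guidelines on Employee Selection Procedures, is the “four-fifths rule”: a group whose selection rate is less than 80% of the highest group’s rate is generally regarded as showing evidence of adverse impact. The minimal Python sketch below applies that rule to hypothetical screening counts. The group labels and numbers are invented and are not taken from the Harper complaint. A ratio below 0.8 is a signal to investigate, not a legal conclusion on its own.

```python
def selection_rates(outcomes):
    """outcomes maps group -> (selected, applied); returns group -> selection rate."""
    return {group: sel / applied for group, (sel, applied) in outcomes.items()}

def adverse_impact_ratios(outcomes):
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(outcomes)
    top = max(rates.values())
    return {group: rate / top for group, rate in rates.items()}

# Hypothetical screening results: group -> (advanced past the ATS, applied).
outcomes = {"Group A": (60, 200), "Group B": (18, 120)}
ratios = adverse_impact_ratios(outcomes)

for group, ratio in ratios.items():
    flag = "below 4/5 threshold" if ratio < 0.8 else "meets 4/5 threshold"
    print(f"{group}: impact ratio {ratio:.2f} ({flag})")
# Group A: impact ratio 1.00 (meets 4/5 threshold)
# Group B: impact ratio 0.50 (below 4/5 threshold)
```

Note that the calculation never needs to know why the rates differ; that is exactly why disparate impact can be shown without proving intent.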

2. Impact: Individual Exclusion and Systemic Erosion ⚖️

The deployment of unvetted AI in recruitment creates a “digital gatekeeper” effect that can unintentionally forge a lasting underclass of “algorithmically invisible” professionals. When qualified, senior-level talent is purged by software, the result is not just individual harm but a systemic erosion of the entire labor market that stifles diversity and innovation.

This “talent-wastage crisis” occurs when organizations rely on systems that prioritize “similarity” over “qualification.” The damage goes beyond individual distress and lost wages: these systems systematically reinforce existing workforce patterns, such as the “male-dominated workforce patterns” that have already drawn condemnation from the California Civil Rights Council. By prioritizing candidates who look like the current majority, companies bake past exclusions into their future.

Efficiency vs. Actual Outcomes

| Intended efficiency | Actual outcomes |
|---|---|
| Accelerated candidate screening and shortlisting | Alleged systemic exclusion of qualified, senior-level minority talent |
| Reduced administrative and per-hire costs | Substantial legal exposure; SiriusXM has already paid multi-million-dollar settlements in other matters, such as the $28M TCPA settlement in Campbell v. Sirius XM Radio Inc. |
| Standardized, data-driven decision-making | Reputational damage and institutionalized proxy discrimination |

The exclusion of professionals like Harper deprives the technology sector of essential expertise and illustrates how, when the AI lifecycle fails, an efficiency tool can quickly become a liability engine.

3. Lifecycle Failure: The Poisoned Well of Training Data ⚙️

The core failure of many automated systems lies in the principle of “garbage in, garbage out.” AI does not inherently create fairness; it learns from and replicates historical human behavior. If a company’s past hiring was biased, the model internalizes those prejudices and treats the characteristics of past hires as the only valid predictors of future success. In the SiriusXM case, the concern is that the system effectively “learned” to discriminate by drinking from a “poisoned well” of biased historical data.
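A deliberately simplified Python sketch of “garbage in, garbage out” follows. The records, the `learn_zip_scores` helper, and the idea of scoring by zip code are hypothetical illustrations, not a description of how iCIMS or SiriusXM actually scored applicants. Every historical applicant here is equally qualified, but past hiring favored `ZIP-A`. A naive model that learns hire rates from that history hands the same bias to new applicants.

```python
from collections import defaultdict

# Hypothetical historical records: (zip_code, qualified, hired). Invented for illustration.
history = (
    [("ZIP-A", True, True)] * 40 + [("ZIP-A", True, False)] * 10
    + [("ZIP-B", True, True)] * 10 + [("ZIP-B", True, False)] * 40
)

def learn_zip_scores(records):
    """Naive 'model': score each zip code by the past hire rate of qualified applicants."""
    hired = defaultdict(int)
    total = defaultdict(int)
    for zip_code, qualified, was_hired in records:
        if qualified:
            total[zip_code] += 1
            hired[zip_code] += int(was_hired)
    return {z: hired[z] / total[z] for z in total}

scores = learn_zip_scores(history)

# Two new applicants with identical qualifications, differing only by zip code.
for zip_code in ("ZIP-A", "ZIP-B"):
    print(f"{zip_code}: learned score {scores[zip_code]:.2f}")
# ZIP-A: learned score 0.80
# ZIP-B: learned score 0.20
```

Nothing in the code mentions race; the disparity comes entirely from the historical labels the model was fitted to.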

To move from passive use to active governance, employers must adopt these 10 Action Mandates:

- ⚖️ Establish AI Governance: Define oversight roles and clear guardrails consistent with NIST frameworks.

- 🛡️ Rigorous Vendor Audits: Demand proof of bias testing rather than relying on vendor “fairness” marketing.

- 📢 Mandate Transparency: Clearly communicate to candidates when and how AI tools are being used.

- 👤 Publicize Human-in-the-Loop: Provide clear pathways for human review or alternative assessments.

- 🎯 Strict Metric Alignment: Ensure AI criteria relate exclusively to essential job functions.

- 🛑 Enforce Human Oversight: Ensure a qualified professional can override any algorithmic recommendation.

- 📜 Maintain Audit Trails: Record the rationale and data process for every automated decision.

- ♿ Execute Accessibility Audits: Regularly test AI tools for accessibility and compliance with disability accommodation requirements.

- 📉 Continuous Monitoring: Run rolling statistical analyses to identify disparities in protected classes.

- ⚖️ Track Legal Standards: Monitor the rapid shift in state and federal regulations to ensure compliance.
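The “Continuous Monitoring” mandate can be made concrete with a significance test run on each time window. The minimal Python sketch below uses a pooled two-proportion z-test, a standard statistical technique chosen here for illustration, to compare two groups’ pass-through rates per month. The monthly counts, group labels, and the 0.05 review threshold are assumptions. The test complements the four-fifths ratio by asking whether a gap is larger than chance would explain.

```python
import math

def two_proportion_z(sel_a, n_a, sel_b, n_b):
    """Pooled two-proportion z-test; returns (z, two-sided p-value)."""
    p_a, p_b = sel_a / n_a, sel_b / n_b
    pooled = (sel_a + sel_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:  # everyone or no one was selected: no measurable gap
        return 0.0, 1.0
    z = (p_a - p_b) / se
    # For a standard normal Z, P(|Z| > |z|) = erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Hypothetical monthly batches: (month, (selected, applied) for group A, same for group B).
batches = [
    ("2025-01", (30, 100), (27, 100)),
    ("2025-02", (35, 100), (15, 100)),
]

for month, (sa, na), (sb, nb) in batches:
    z, p = two_proportion_z(sa, na, sb, nb)
    status = "REVIEW" if p < 0.05 else "no significant gap"
    print(f"{month}: z={z:.2f}, p={p:.4f} -> {status}")
```

Running the same check on every window, and logging the result, turns “monitoring” from a one-time audit into a standing alarm.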

The alleged absence of a “Human-in-the-Loop” at SiriusXM let the algorithm become the final arbiter of a candidate’s career. If that allegation holds, then by failing to provide a human override, the company effectively automated its own legal liability.
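One way to operationalize the human-override and audit-trail mandates is sketched below in Python. The `route_applicant` function, the 0.6 threshold, the `screener-v1` version tag, and the two routes are hypothetical design choices, not a feature of iCIMS or any specific ATS. The key property is that the model can advance a candidate but can never reject one on its own: low scores go to a person, and every routing decision is recorded with its rationale.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone

@dataclass
class Decision:
    applicant_id: str
    score: float
    route: str          # "advance" or "human_review"; the model never rejects by itself
    reason: str
    model_version: str
    timestamp: str

def route_applicant(applicant_id, score, threshold=0.6, model_version="screener-v1"):
    """Hypothetical routing rule: high scores advance, everything else goes to a human."""
    if score >= threshold:
        route, reason = "advance", f"score {score:.2f} >= threshold {threshold}"
    else:
        route = "human_review"
        reason = f"score {score:.2f} < threshold {threshold}; human decision required"
    return Decision(applicant_id, score, route, reason, model_version,
                    datetime.now(timezone.utc).isoformat())

audit_log = []  # in practice this would be append-only, tamper-evident storage
for applicant_id, score in [("A-001", 0.82), ("A-002", 0.31)]:
    decision = route_applicant(applicant_id, score)
    audit_log.append(decision)
    print(json.dumps(asdict(decision)))
```

Because every record stores the score, threshold, and model version, a reviewer or regulator can later reconstruct why each candidate was routed the way they were.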

4. Bias Types: Proxies, Dialects, and Dehumanization 🔍

“Proxy Bias” can be more dangerous than overt discrimination because it hides behind seemingly neutral data points, which makes it harder to detect and easier to ignore.

- Algorithmic Proxy Bias: In the Harper case, variables like zip codes allegedly functioned as stand-ins for race, filtering out Detroit-based professionals.

- Dialect Bias: A 2024 study published in Nature found that large language models (LLMs) covertly associate African American English (AAE) with negative stereotypes, matching AAE speakers to less prestigious jobs. Chillingly, in hypothetical criminal cases the models were also more likely to suggest that speakers of AAE be “sentenced to death” than speakers of Standard American English.

- Cultural Bias: Institutional history can “bake” prejudice into a system. SiriusXM has faced controversies involving its on-air talent, including a resurfaced 1993 blackface video of Howard Stern and the firing of Anthony Cumia over racist tirades. If a similar culture shaped past hiring decisions, it could carry over into the historical data used to train recruitment engines.

This bias is often masked by the concept of “fit scores.” In practice, “fit” can act as a euphemism for “similarity to the existing majority.” By identifying the characteristics of past successful hires, the algorithm creates a closed loop that excludes anyone who does not mirror the biased historical baseline. These localized failures have far-reaching consequences as the same technologies are exported across the globe.
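The “fit score” closed loop can be sketched in a few lines of Python. Here “fit” is assumed to be cosine similarity to the average profile of past hires. The three features and all values are hypothetical, and real products may compute fit very differently. The sketch shows a candidate with four times the experience scoring far lower simply because they did not attend the same schools or live in the same area as previous hires.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norms = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norms if norms else 0.0

def centroid(vectors):
    """Average profile across a list of feature vectors."""
    return [sum(col) / len(vectors) for col in zip(*vectors)]

# Hypothetical features: [same_school_as_past_hires, same_zip_cluster, years_experience / 10]
past_hires = [[1, 1, 0.4], [1, 1, 0.5], [1, 1, 0.3]]
profile = centroid(past_hires)

mirror = [1, 1, 0.3]     # 3 years; matches past hires' school and area
outsider = [0, 0, 1.2]   # 12 years; different school and area

print("mirror fit:", round(cosine(mirror, profile), 3))
print("outsider fit:", round(cosine(outsider, profile), 3))
```

If the highest-fit candidates are then hired and added to `past_hires`, the profile narrows further with each cycle, which is the closed loop described above.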

5. Global South Lens: The Export of Algorithmic Inequality 🌍

The “Global AI Divide” represents a new era of digital colonialism, in which technologies built in Silicon Valley are exported globally without regard for local linguistic or socio-economic contexts. UN Trade and Development (UNCTAD) has warned that AI is on track to become a $4.8 trillion market by 2033 and that it could affect 40% of jobs worldwide.

When algorithms trained on U.S. zip codes or Western prestige schools are applied to the Global South, they can fail badly. The result is “global talent wastage,” in which local expertise is systematically undervalued because it does not fit a narrow, data-driven Western profile of a “successful hire.”
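A small Python sketch shows one mechanism behind this failure: lookup-style features learned from one market silently default to zero for anything unseen. The school names, weights, and the 70/30 scoring formula in `candidate_score` are all hypothetical. A local graduate with 12 years of experience ends up below a U.S. graduate with 3 years, only because the model never saw their university during training.

```python
# Hypothetical school weights learned only from U.S. hiring history.
SCHOOL_WEIGHTS = {"US School A": 1.0, "US School B": 0.8, "US School C": 0.6}

def candidate_score(school, years, default_school_weight=0.0):
    """Hypothetical screening score: 70% school weight, 30% experience (capped at 10 years)."""
    # Any school absent from the training data silently falls back to the default weight.
    school_weight = SCHOOL_WEIGHTS.get(school, default_school_weight)
    experience = min(years, 10) / 10
    return 0.7 * school_weight + 0.3 * experience

us_grad = candidate_score("US School C", years=3)
local_expert = candidate_score("Local University (Kenya)", years=12)

print(f"US graduate, 3 years: {us_grad:.2f}")          # 0.51
print(f"Local graduate, 12 years: {local_expert:.2f}")  # 0.30
```

The default of `0.0` is the quiet design choice that does the damage: “unknown” is treated as “unqualified” instead of being flagged for human review.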

6. Bigger Picture: The Regulatory Wave and Accountability 🛡️

The “wild west” era of unregulated AI is over. Corporate “neutrality” is no longer a valid legal defense as a new wave of legislation imposes strict accountability:

- California ADS Regulations (Oct 2025): Address third-party liability and prohibit discrimination via automated systems.

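- Texas TRAIGA (Jan 2026): Focuses on governance and applies an intent-based standard, under which disparate impact alone does not establish intent to discriminate.

- Colorado SB 24-205 (June 2026): Imposes a duty of reasonable care on developers and deployers of “high-risk” AI systems to protect consumers from algorithmic discrimination.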

The Harper case is distinct from litigation like Mobley v. Workday. While Mobley tests vendor liability, Harper focuses squarely on employer liability. Under current and emerging laws, the employer remains the “party of record for the liability.” Companies cannot outsource their civil rights responsibilities to software providers.

Human-in-the-loop systems are no longer a nice-to-have in hiring. In high-stakes settings, they are a civil-rights safeguard.

There is a disturbing parallel between SiriusXM’s alleged hiring barriers and its customer service practices. The New York Attorney General found that the company used a “burdensome” cancellation process to “trap” customers in subscriptions, including 40-minute chats in which agents refused to take “no” for an answer. This “bias toward friction,” whether it confronts a customer trying to leave or a qualified candidate trying to enter, may point to an institutional philosophy that prioritizes exclusion over human rights.

7. References 📚

- AI & Employment Law Update: Harper v. Sirius XM Radio, LLC – Angela Reddock-Wright, October 3, 2025.

- AI in the Workplace: US Legal Developments – Cooley Alert, September 4, 2025.

- Another Employer Faces AI Hiring Bias Lawsuit: 10 Actions You Can Take to Prevent AI Litigation – Fisher Phillips, August 15, 2025.

- Artificial Intelligence Bias: Harper v. Sirius XM Challenges Algorithmic Discrimination in Hiring – Epstein Becker Green, October 17, 2025.

- Attorney General James Stops SiriusXM from Trapping New York Customers in Unwanted Subscriptions – Office of the New York State Attorney General, November 22, 2024.

- Campbell, et al. v. Sirius XM Radio Inc., Case No. 2:22-cv-2261-CSB-EIL (C.D. Ill.) – $28M TCPA Settlement.

- Harper v. Sirius XM Radio, LLC, Case No. 2:25-cv-12403-TGB-APP (E.D. Mich.) – Filed August 4, 2025.

- Howard Stern speaks out after 1993 blackface video resurfaces – Global News, January 16, 2025.

- Mobley v. Workday and AI Discrimination – Columbia Black Pre-Law Journal, December 6, 2025 (Referencing N.D. Cal. Case No. 3:23-cv-00770).

- SiriusXM Radio faces class action on AI discrimination – Staffing Industry Analysts, September 2, 2025.

📥 AI Fairness 101 — Real-World Incidents

Related in this cluster

- When an Algorithm Broke Thousands of Families: The Netherlands Child Welfare Scandal

- Access Denied: How India’s Digital ‘Cure-All’ Became a Real-World Fairness Crisis

- The Golden Touch of Ruin: How Michigan’s MiDAS Algorithm Falsely Accused 40,000 People of Fraud

- The COMPAS Algorithm Scandal: When AI Decides Who Goes to Jail

- The Optum Healthcare Algorithm Bias Against Black Patients (2019)

- When Algorithms Decide Who Recovers: The UnitedHealth nH Predict Case

- The Algorithmic Gender Bias — Lessons from the Amazon AI Hiring Failure

- AI Hiring Gone Wrong: How Eightfold’s Social Media Profiling Sparked a Fairness and Consent Crisis

- How AI Bias Locked Out Millions of Job Seekers (A Case Study on Mobley v. Workday)

- Browse all AI Fairness posts

🔎 Explore the AI Fairness 101 Series

This post is part of the AI Fairness 101 - Real-World Incidents learning track.

Stay tuned - new posts every week.

💬 Join the Conversation

Have thoughts, experiences, or questions about AI fairness? Share your comments, discuss with global experts, and connect with the community:

👉 Reach out via the Contact page


🌍 Follow GlobalSouth.AI

Stay connected and join the conversation on AI governance, fairness, safety, and sustainability.

- LinkedIn: https://linkedin.com/company/globalsouthai

- Substack Newsletter: https://newsletter.globalsouth.ai/

Subscribe to stay updated on new case studies, frameworks, and Global South perspectives on responsible AI.
