The Optum Healthcare Algorithm Bias Against Black Patients (2019)

A 2019 case study of the Optum healthcare algorithm showing how proxy bias led to racial disparities and under-served Black patients.

AI Fairness 101 - Real-World Incidents

Part 5 of 7

AI Fairness 101 — Real Incident (2019)

Optum Healthcare Algorithm: When Proxy Becomes Bias

⚠️ Key Takeaway

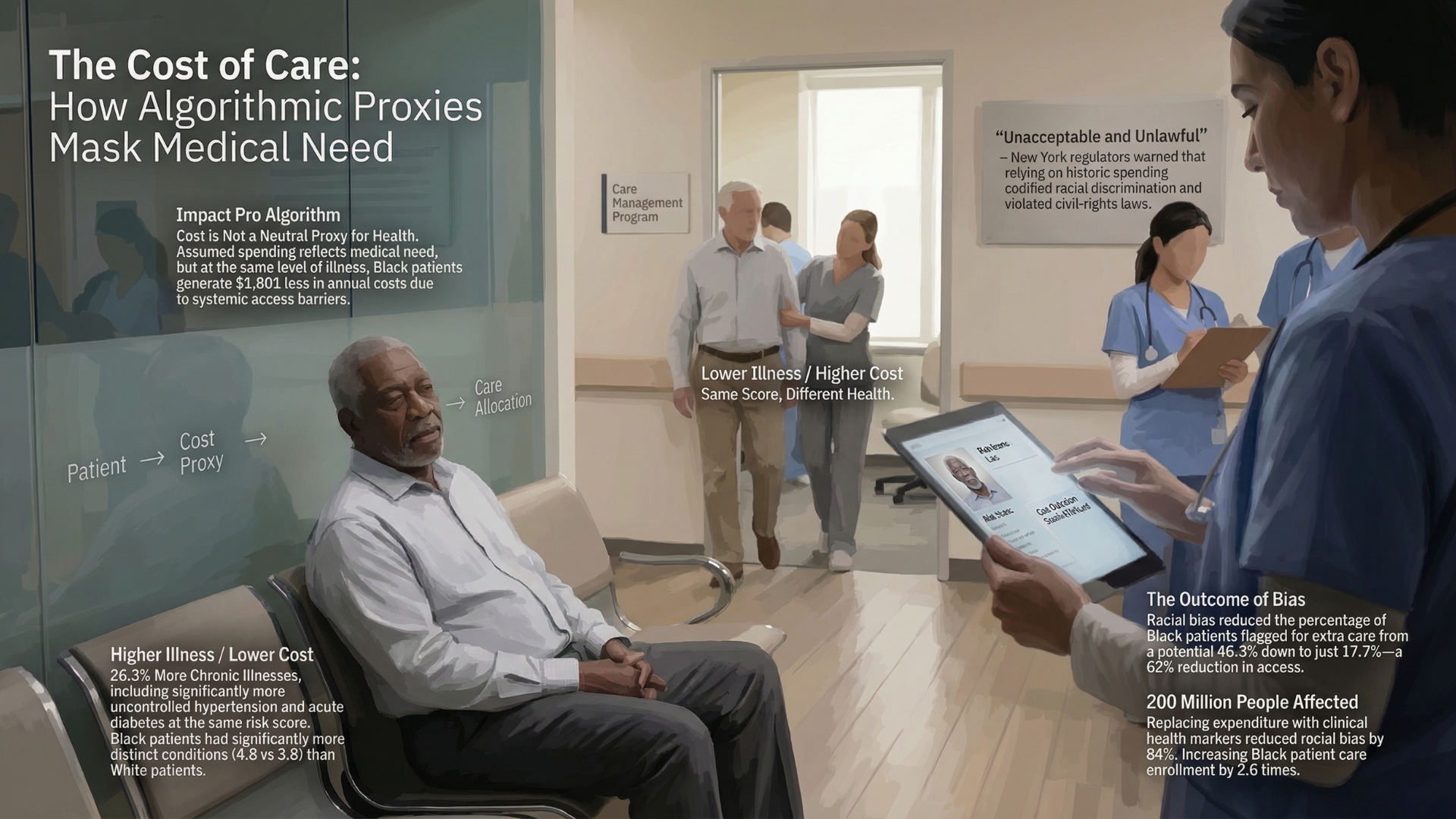

The Optum system predicted future healthcare spending, not actual medical need. In an unequal healthcare system, that made structural disadvantage look like lower risk.

- 💰 Proxy used: future healthcare spending

- ❤️ Actual goal: identify patients with the greatest medical need

- 📈 Estimated fix impact: the share of Black patients among those flagged for extra support could rise from 17.7% to 46.5%

- 👥 Validation scale: roughly 3.7 million patients

1. What Happened 🏥

In 2019, researchers uncovered a critical failure in a widely used healthcare algorithm developed by Optum, part of UnitedHealth Group. The system was deployed across hospitals in the United States to identify patients who should be enrolled in high-risk care management programs—intensive interventions designed for those with the greatest medical need.

The algorithm’s core logic was straightforward: it predicted future healthcare spending and used that prediction as a proxy for identifying high-need patients. In operational settings, patients above certain risk thresholds were automatically flagged for additional care.
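The flagging step described above can be sketched as a simple rank-and-cut rule. This is a minimal illustration, not Optum's implementation: the `Patient` class, the `flag_high_risk` function, and the `top_fraction` cutoff are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class Patient:
    patient_id: str
    predicted_cost: float  # hypothetical model output, dollars per year


def flag_high_risk(patients, top_fraction):
    """Return IDs of the patients with the highest predicted cost.

    top_fraction is an illustrative cutoff, not a documented setting.
    """
    if not patients:
        return []
    ranked = sorted(patients, key=lambda p: p.predicted_cost, reverse=True)
    n_flagged = max(1, round(len(ranked) * top_fraction))
    return [p.patient_id for p in ranked[:n_flagged]]


# Synthetic example data.
patients = [
    Patient("A", 12_000.0),
    Patient("B", 3_500.0),
    Patient("C", 8_200.0),
    Patient("D", 900.0),
]
flagged = flag_high_risk(patients, top_fraction=0.5)
print(flagged)
```

Nothing in this rule looks at illness. Whoever is predicted to spend the most is flagged, which is why the choice of prediction target matters so much.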

However, a landmark study led by Ziad Obermeyer revealed that this proxy was fundamentally flawed. Because Black patients historically incurred lower healthcare costs—due to unequal access, structural barriers, and differences in care utilization—the algorithm systematically assigned them lower risk scores than equally sick White patients.

Notably, the researchers did not initially name the vendor, emphasizing that the issue reflected a broader industry practice. Regulators later identified the system as Optum’s “Impact Pro.”

In response, the New York State Department of Financial Services and the New York State Department of Health issued a joint letter to UnitedHealth Group, warning that the system’s outcomes could be discriminatory.

The model was good at predicting cost. The problem was that cost was the wrong target.

2. Impact ⚠️

The consequences of this design choice were both measurable and systemic.

At the same algorithmic risk score, Black patients were significantly sicker than White patients when evaluated using clinical indicators such as number of chronic conditions. In one high-risk category, Black patients had approximately 26 percent more chronic illnesses than their White counterparts.

The root of this disparity lay in the cost proxy itself. At equivalent levels of illness, Black patients generated roughly $1,800 less in annual healthcare spending. The algorithm interpreted this lower spending as lower need, thereby deprioritizing patients who were, in reality, more vulnerable.
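The kind of audit that surfaces this pattern compares a clinical measure across groups within the same risk-score band. The sketch below assumes a hypothetical record layout of (score band, group, chronic-condition count). The rows are synthetic and are not taken from the study.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical audit records: (risk_score_band, group, chronic_condition_count).
# Synthetic values for illustration only.
records = [
    ("high", "Black", 5), ("high", "Black", 6), ("high", "Black", 4),
    ("high", "White", 4), ("high", "White", 4), ("high", "White", 4),
    ("low", "Black", 2), ("low", "White", 2),
]


def mean_conditions_by_band(rows):
    """Average chronic-condition count for each (band, group) pair."""
    buckets = defaultdict(list)
    for band, group, conditions in rows:
        buckets[(band, group)].append(conditions)
    return {key: mean(values) for key, values in buckets.items()}


summary = mean_conditions_by_band(records)
# Relative difference in illness burden at the same score band.
gap = summary[("high", "Black")] / summary[("high", "White")] - 1
print(f"Relative gap in the high band: {gap:.0%}")
```

If the score measured need equally well for both groups, the gap within a band would be close to zero. A persistent positive gap is the signal that one group has to be sicker to receive the same score.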

This distortion translated directly into unequal access to care. The study estimated that correcting the bias would raise the share of Black patients among those flagged for additional support from 17.7 percent to 46.5 percent, roughly a 2.6-fold increase.
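The size of that shift follows directly from the two percentages reported above. A short check, using only those two figures:

```python
share_before = 17.7  # percent, as reported in the text
share_after = 46.5   # percent, as reported in the text

fold_increase = share_after / share_before
point_increase = share_after - share_before

print(f"fold increase: {fold_increase:.2f}x")
print(f"percentage-point increase: {point_increase:.1f}")
```

The two numbers answer different questions. The fold increase describes relative change, while the percentage-point difference describes how much of the flagged group would change composition.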

The bias was not limited to a single dataset. Validation on a population of approximately 3.7 million patients confirmed that the issue was systemic, not incidental.

Regulators described the outcomes as “unacceptable” and “unlawful,” underscoring the real-world implications of algorithmic bias in healthcare decision-making.

3. Lifecycle Failure 🧭

The Optum case illustrates a failure that originates not in the algorithm itself, but in how the problem was framed:

- 🧩 Problem formulation: Developers chose healthcare spending as a proxy for health need. This introduced label bias, because the target variable itself reflected social inequity.

- 🗂️ Data layer: The model was trained on claims data, which captures healthcare utilization rather than underlying disease burden. Populations with limited access to care were therefore underrepresented in the signal.

- 🤖 Model development: The system performed as intended. It accurately predicted future costs, but that success was misaligned with the real use case.

- 🧪 Validation: Performance was assessed mainly through predictive accuracy, without systematic checks across racial groups.

- 🏥 Deployment: The model became a gatekeeping tool for additional care, which institutionalized the bias inside clinical workflows.

An AI system can be technically sound and still produce inequitable outcomes if the underlying objective is flawed.
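The validation gap noted above could be narrowed with a subgroup check. One option, sketched here under stated assumptions, is to ask what share of patients who meet a clinical-need criterion are actually flagged by the score, broken down by group. The function name, the row layout, and both cutoffs are hypothetical, and the rows are synthetic.

```python
def flag_rate_among_high_need(rows, need_cutoff, score_cutoff):
    """Per-group share of high-need patients whose score clears the cutoff.

    rows: iterable of (group, risk_score, chronic_conditions) tuples.
    """
    totals, flagged = {}, {}
    for group, score, conditions in rows:
        if conditions < need_cutoff:
            continue  # only audit patients who meet the need criterion
        totals[group] = totals.get(group, 0) + 1
        if score >= score_cutoff:
            flagged[group] = flagged.get(group, 0) + 1
    return {g: flagged.get(g, 0) / n for g, n in totals.items()}


# Synthetic rows for illustration only.
rows = [
    ("Black", 0.62, 5), ("Black", 0.81, 6), ("Black", 0.55, 5), ("Black", 0.40, 1),
    ("White", 0.84, 5), ("White", 0.90, 6), ("White", 0.78, 5), ("White", 0.30, 1),
]
rates = flag_rate_among_high_need(rows, need_cutoff=5, score_cutoff=0.75)
print(rates)
```

A model can score well on overall predictive accuracy and still show very different flag rates here, which is why a single aggregate metric is not enough for validation.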

4. Bias Types 🧪

The Optum incident reflects multiple interacting forms of bias rather than a single point failure:

- ⚖️ Label bias: healthcare cost failed to capture real health need.

- 🕰️ Historical bias: the training data reflected longstanding disparities in access and treatment.

- 👥 Representation bias: patients with limited access to care generated fewer claims, so their illness was under-recorded in the data the model learned from.

- 🏹 Allocation bias: the model’s outputs directly shaped who received extra care.

- 🧠 Automation bias: institutions trusted the score enough that the deeper problem went unchallenged.

5. Global South Lens 🌍

While the Optum incident occurred in the United States, its underlying mechanism is highly relevant to Global South contexts.

In many countries, healthcare systems are characterized by high out-of-pocket expenditure, uneven access, and fragmented service delivery. In such environments, spending is even less reliable as a proxy for need.

In India, for example, digital health systems and insurance schemes such as those managed by the National Health Authority generate large volumes of claims data. While these datasets enable advanced analytics, they capture only those interactions that occur within the formal healthcare system.

Populations that face barriers to access—whether due to income, geography, gender, or social factors—are systematically underrepresented. If models are trained on such data using cost-based proxies, they risk reproducing and amplifying existing inequities.

The implication is clear: in contexts where access is unequal, models trained on utilization data are effectively modeling access, not need.
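A toy model makes the point concrete. It assumes, purely for illustration, that recorded spending is underlying need multiplied by an access rate. The function name, the multiplicative form, the access rates, and the cost constant are all assumptions, not measurements from any health system.

```python
def observed_spending(need, access_rate, cost_per_unit_need=1_000):
    """Spending that reaches the claims data under a simple
    multiplicative model (an assumption made for this sketch)."""
    return need * access_rate * cost_per_unit_need


# Two hypothetical populations with identical underlying need.
need_levels = [1, 2, 3, 4, 5]
high_access = [observed_spending(n, access_rate=0.9) for n in need_levels]
low_access = [observed_spending(n, access_rate=0.5) for n in need_levels]

# Compare a need-5 patient with low access against a need-3 patient
# with high access.
print(low_access[4], high_access[2])
```

In this sketch the sicker patient records less spending than the healthier one, so any model trained to predict spending would rank them in the wrong order.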

6. Bigger Picture 🔎

The Optum case illustrates a broader principle that extends beyond healthcare:

AI systems inherit the biases of what they measure.

When proxies are used to simplify complex concepts, they carry embedded assumptions about the world. If those assumptions are not critically examined, they can lead to outcomes that are both inequitable and difficult to detect.

The study demonstrated that significant bias reduction—up to 84 percent—was achievable not through complex algorithmic adjustments, but by redefining the target variable to better reflect actual health need.
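The effect of changing the target can be illustrated by ranking the same patients on two different columns. This is a simplified sketch: it ranks on the recorded values directly rather than on model predictions, the data are synthetic, and it does not reproduce the study's method or its 84 percent figure.

```python
# Synthetic patients: (group, annual_cost_dollars, chronic_conditions).
patients = [
    ("White", 9_000, 4), ("White", 8_500, 4), ("White", 7_000, 3), ("White", 3_000, 1),
    ("Black", 7_200, 5), ("Black", 6_700, 5), ("Black", 5_200, 3), ("Black", 1_200, 1),
]


def share_in_top_k(rows, key_index, group, k):
    """Share of `group` among the k rows ranked highest on column key_index."""
    top = sorted(rows, key=lambda r: r[key_index], reverse=True)[:k]
    return sum(1 for r in top if r[0] == group) / k


# Rank by cost (column 1) versus by chronic conditions (column 2).
share_by_cost = share_in_top_k(patients, key_index=1, group="Black", k=4)
share_by_need = share_in_top_k(patients, key_index=2, group="Black", k=4)
print(share_by_cost, share_by_need)
```

The selection rule is identical in both calls. Only the column being ranked changes, and with it the composition of the group selected for extra care.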

This finding has important implications for AI governance. It suggests that fairness cannot be treated as a post-hoc adjustment, but must be embedded in the design of the system, starting with the choice of objective and data.

Frameworks such as the National Institute of Standards and Technology AI Risk Management Framework and the UNESCO Recommendation on the Ethics of Artificial Intelligence emphasize this lifecycle approach, highlighting the need for fairness to be actively managed rather than assumed.

Ultimately, the Optum incident is not an isolated failure. It is a warning about a recurring pattern in AI systems: the substitution of measurable proxies for meaningful outcomes.

If left unexamined, such substitutions can transform efficiency tools into mechanisms of exclusion.

References

Obermeyer et al. (2019). Dissecting racial bias in an algorithm used to manage the health of populations. https://www.science.org/doi/10.1126/science.aax2342

Nature (2019). Algorithmic bias in healthcare. https://www.nature.com/articles/d41586-019-03228-6

WHO (2021). Ethics and governance of AI for health. https://www.who.int/publications/i/item/9789240029200

World Bank. Out-of-pocket health expenditure. https://data.worldbank.org/indicator/SH.XPD.OOPC.CH.ZS

NIST. AI Risk Management Framework. https://www.nist.gov/itl/ai-risk-management-framework

UNESCO. AI Ethics Recommendation. https://unesdoc.unesco.org/ark:/48223/pf0000381137
